Multiple studies suggest that the psychological dependency is bidirectional: pre-existing mental disorders can lead to cannabis dependency, cannabis dependency can exacerbate pre-existing mental disorders, and excessive use can trigger mental disorders you may be genetically prone to, most commonly psychosis. Psychosis has symptoms that overlap with schizophrenia; however, your symptoms seem a bit extreme for psychosis. Is there perhaps a history of schizophrenia and/or paranoid personality disorder in your family? If you don’t know, perhaps consider looking into it.

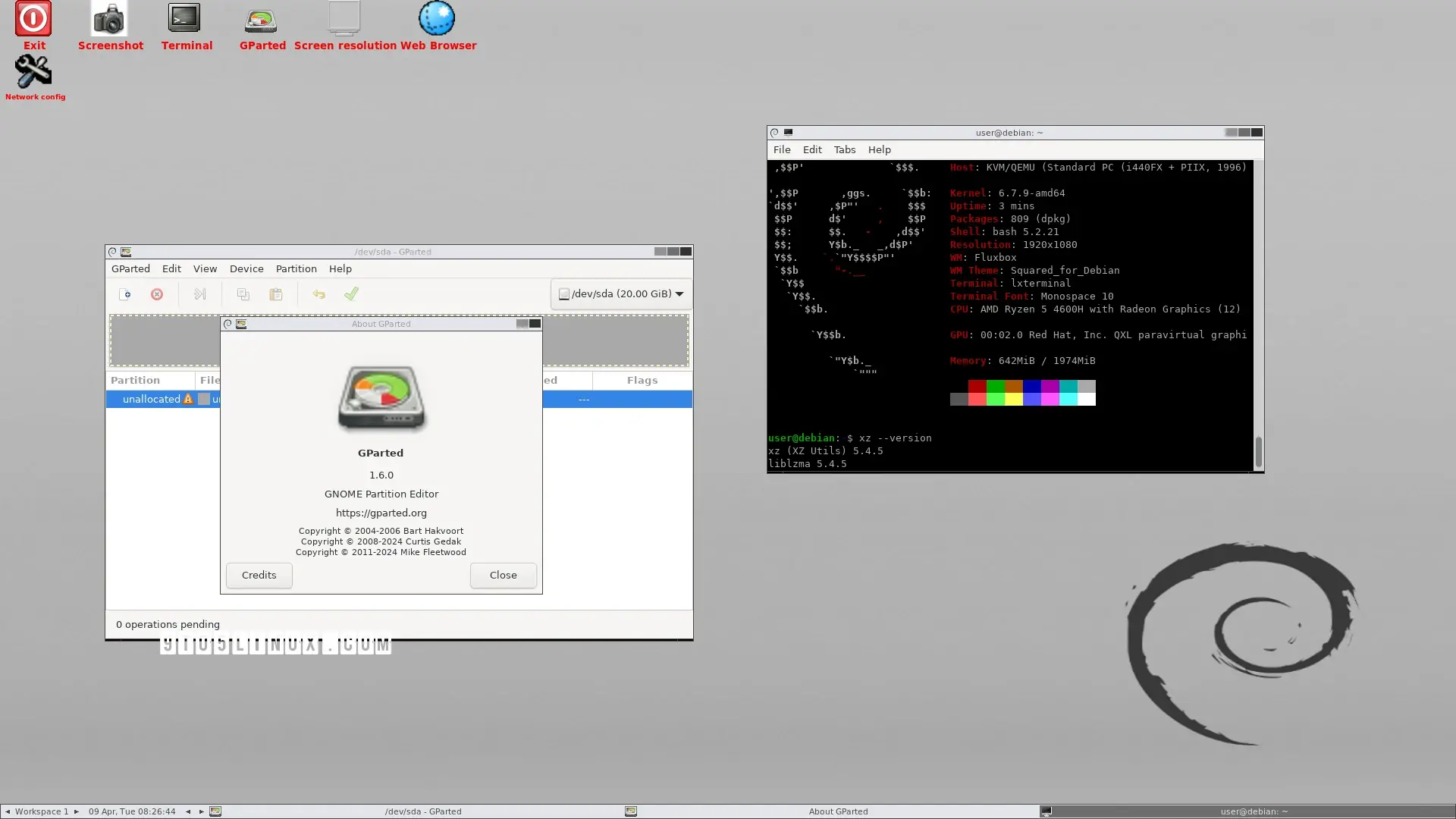

<gentoo joke>